by Laksh Krishnamurthy, Chief Technology Officer, Autonomize AI

Andrej Karpathy built something fascinating over a weekend: a small “LLM Council” prototype that pretty much captures both the promise and the problem of AI in enterprise settings. It’s a lightweight multi-model orchestration system where several LLMs answer a query independently, cross-review each other’s answers, and then a final “chair” model synthesizes the best response.

It's also remarkably elegant: just a few hundred lines of Python and JavaScript, with a simple FastAPI backend, a React/Vite frontend, and basic JSON file storage.

What Karpathy demonstrates is genuinely significant: multi-model workflows aren't that hard to build. The design is LLM-agnostic, using a model broker that makes models plug-and-play. Swap one out, plug another in. It's beautifully simple.
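To make the three-stage flow concrete, here is a minimal Python sketch of one council round. It is not Karpathy's code: each model is assumed to be a plain callable that takes a prompt string and returns a string (a hypothetical stand-in for a real API client), and the prompt wording and the `run_council` / `CouncilResult` names are illustrative.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Hypothetical stand-in for a model client: prompt string in, response string out.
Model = Callable[[str], str]


@dataclass
class CouncilResult:
    answers: Dict[str, str]   # stage 1: independent answers, keyed by member name
    reviews: Dict[str, str]   # stage 2: each member's critique of the others
    final: str                # stage 3: the chair's synthesized response


def run_council(query: str, members: Dict[str, Model], chair: Model) -> CouncilResult:
    # Stage 1: every member answers the query independently.
    answers = {name: model(query) for name, model in members.items()}

    # Stage 2: every member reviews the *other* members' answers.
    # Answers are listed without author names, so reviewers judge content, not source.
    reviews = {}
    for name, model in members.items():
        others = "\n".join(f"- {ans}" for peer, ans in answers.items() if peer != name)
        reviews[name] = model(f"Review these answers to {query!r}:\n{others}")

    # Stage 3: the chair sees all answers and reviews and writes the final response.
    digest = "\n".join(
        f"[{name}] answer: {answers[name]}\n[{name}] review: {reviews[name]}"
        for name in members
    )
    final = chair(f"Synthesize the best answer to {query!r} from:\n{digest}")
    return CouncilResult(answers=answers, reviews=reviews, final=final)
```

Because council members live in a plain dictionary, swapping a model in or out is a one-entry change, which is the plug-and-play property in miniature.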

But here's where it gets interesting. That simplicity in a weekend project? It exposes all the complexity of the real world. What's missing from the prototype:

- No authentication or access control

- No audit logs, no governance, no PII/PHI controls

- No retry or fallback logic

- No reliability guarantees or uptime monitoring

- No enterprise integration

- Not production-safe for regulated industries

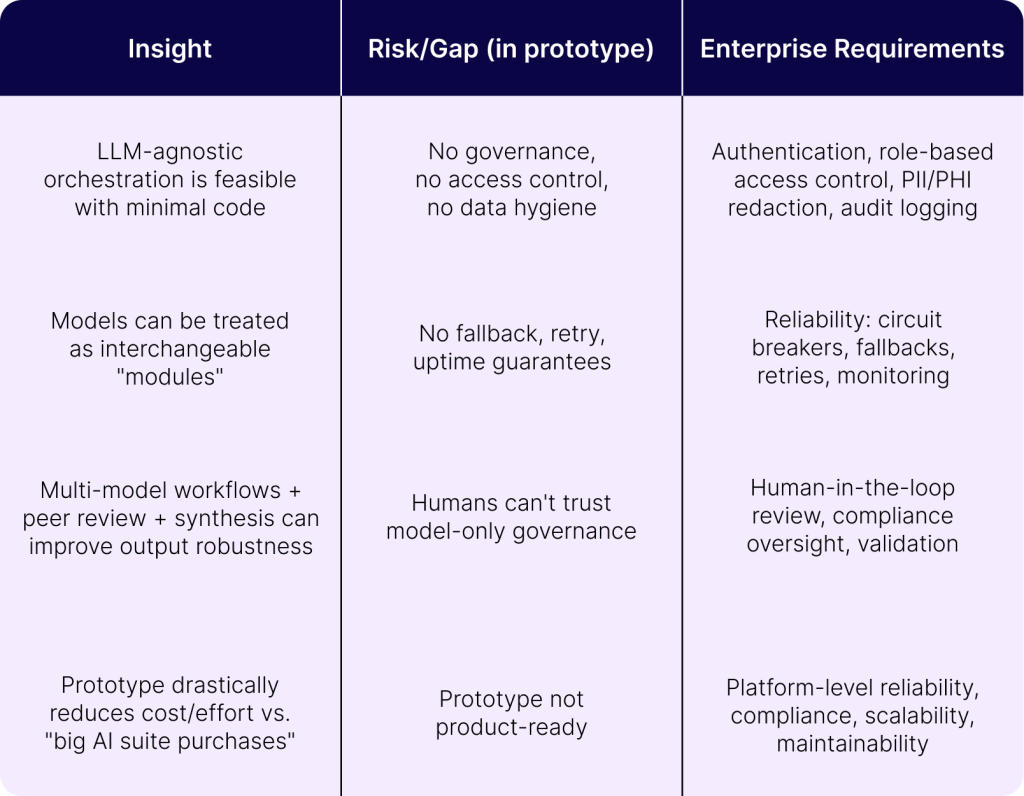

That gap between what works as a demo and what actually works in production? That’s the entire point. The prototype exposes what we call the “missing layer” in the AI stack. It’s the enterprise orchestration, governance, and reliability layer that sits between raw models and real business workflows. And that layer? That’s not trivial.

Here’s how the gaps break down strategically:

How Autonomize AI Makes This Enterprise-Ready

This is exactly the problem Autonomize already solves. The “LLM Council” demonstrates clever model routing, which is great. We’ve taken that concept and extended it into a healthcare-grade agentic orchestration platform. Because you have to.

The model-agnostic flexibility that made Karpathy's prototype elegant? That's core to how we operate. We treat LLMs, SLMs, and domain-specific models as modular components. Adding or removing models is a configuration change, not an engineering project. But, and this is critical, we've wrapped that flexibility in an enterprise governance layer: built-in PHI and PII redaction, HIPAA-aligned policies, comprehensive audit trails, and role-based access controls. The full compliance posture covers SOC 2 plus payer-grade and provider-grade controls. That is exactly the scaffolding the prototype completely lacks.
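As a rough illustration of what a governance layer means at the code level, the sketch below wraps a model call with a role check, a redaction pass, and an audit entry. It is a toy under stated assumptions, not a production design: the regex patterns, the `ROLE_PERMISSIONS` table, and `governed_call` are hypothetical, and regexes alone fall far short of HIPAA-grade PHI detection.

```python
import hashlib
import re
from datetime import datetime, timezone
from typing import Callable, Dict, List, Set

# Illustrative patterns only: real PHI/PII detection must also catch names, dates,
# medical record numbers, free-text clinical notes, and more.
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.]+\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}

# Hypothetical role-to-permission mapping for role-based access control.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {"clinician": {"query_model"}, "analyst": set()}

# Append-only audit trail (in memory here; a real system would use durable storage).
AUDIT_LOG: List[Dict[str, str]] = []


def redact(text: str) -> str:
    """Replace each pattern match with a bracketed label such as [SSN]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def governed_call(user: str, role: str, prompt: str, model: Callable[[str], str]) -> str:
    # 1. Access control: refuse before any data reaches a model.
    if "query_model" not in ROLE_PERMISSIONS.get(role, set()):
        raise PermissionError(f"role {role!r} may not query models")
    # 2. Redaction: the model only ever sees the scrubbed prompt.
    safe_prompt = redact(prompt)
    response = model(safe_prompt)
    # 3. Audit: record who called, when, and a fingerprint of what was sent.
    AUDIT_LOG.append({
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "prompt_sha256": hashlib.sha256(safe_prompt.encode()).hexdigest(),
    })
    return response
```

Logging a hash of the redacted prompt, rather than the prompt itself, keeps the audit trail verifiable without turning it into another store of sensitive data.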

Where the weekend project handles text-in, text-out scenarios, Autonomize orchestrates across the actual messy reality of healthcare systems. Structured claims data, clinical records, PDFs, EHRs, utilization management and care management systems, care-quality engines, PBM platforms, external data sources. Multi-agent chains handle validation, evidence retrieval, rule checking, decision support. Across all of it. It’s a different game entirely.

And the reliability piece? This matters enormously in production. Autonomize provides retries, fallbacks, circuit breakers, monitoring, guardrails. The unglamorous infrastructure that ensures workflows don’t just break when a model times out or an API runs slow. For prior authorization, care management, claims processing, HEDIS workflows? This isn’t optional. It’s the difference between a demo and a system that clinicians and payers actually trust with real patients and real decisions.
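The retry, fallback, and circuit-breaker pattern described above can be sketched in a few dozen lines of Python. Assumptions: each provider is a hypothetical `(name, callable)` pair, any exception counts as a failure, and the thresholds (`max_failures`, `reset_after`, `base_delay`) are illustrative defaults rather than recommended values.

```python
import time
from typing import Callable, Dict, List, Optional, Tuple


class CircuitBreaker:
    """Opens after `max_failures` consecutive failures; permits a trial call after `reset_after` seconds."""

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0) -> None:
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.failures = 0
        self.opened_at: Optional[float] = None

    def allow(self) -> bool:
        if self.opened_at is None:
            return True  # closed: calls flow normally
        # Half-open: allow a trial call once the cool-down has elapsed.
        return time.monotonic() - self.opened_at >= self.reset_after

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None  # close the circuit again

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = time.monotonic()  # open (or re-open) the circuit


def call_with_resilience(
    prompt: str,
    providers: List[Tuple[str, Callable[[str], str]]],
    breakers: Dict[str, CircuitBreaker],
    retries: int = 2,
    base_delay: float = 0.5,
) -> str:
    """Try providers in priority order, retrying each with exponential backoff."""
    errors: List[str] = []
    for name, model in providers:
        breaker = breakers[name]
        for attempt in range(retries + 1):
            if not breaker.allow():
                errors.append(f"{name}: circuit open")
                break  # fall back to the next provider without waiting
            try:
                result = model(prompt)
                breaker.record_success()
                return result
            except Exception as exc:  # e.g. timeouts or rate-limit errors from a client
                breaker.record_failure()
                errors.append(f"{name}: {exc!r}")
                if attempt < retries:
                    # Exponential backoff: with base_delay=0.5, waits 0.5s, then 1s.
                    time.sleep(base_delay * 2 ** attempt)
    raise RuntimeError("all providers failed: " + "; ".join(errors))
```

In this sketch a provider whose circuit is open is skipped immediately instead of being retried, so a struggling model stops adding latency to every request until its cool-down expires.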

We’ve also built the integration layer that makes orchestration practical in the first place. Connectors to enterprise systems, SFTP, message queues, APIs, data lakes. The prototype exists in isolation, which is fine for a weekend project. But Autonomize works inside real payer and provider ecosystems. And we provide end-to-end observability: lineage tracking, versioning, data provenance, workflow tracing. Enterprises can actually see why a decision happened and which models or agents contributed to it. That’s not a nice-to-have.
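For lineage and workflow tracing, one simple shape is an append-only list of step records, each naming the component and version that ran and fingerprinting its input and output. The `WorkflowTrace` and `TraceStep` classes below are hypothetical; hashing inputs and outputs instead of storing them is one assumed way to keep sensitive data out of the trace while still letting an auditor confirm what each step saw.

```python
import hashlib
import uuid
from dataclasses import dataclass, field
from typing import List, Optional


def _digest(text: str) -> str:
    # Short content fingerprint; truncated for readability in this sketch.
    return hashlib.sha256(text.encode()).hexdigest()[:12]


@dataclass
class TraceStep:
    step: str                      # what happened, e.g. "extract" or "decide"
    component: str                 # which model or agent ran
    version: str                   # which version of it ran
    input_hash: str
    output_hash: str
    parent: Optional[str] = None   # step_id of the upstream step, for lineage
    step_id: str = field(default_factory=lambda: uuid.uuid4().hex)


@dataclass
class WorkflowTrace:
    workflow: str
    steps: List[TraceStep] = field(default_factory=list)

    def record(self, step: str, component: str, version: str,
               inp: str, out: str, parent: Optional[str] = None) -> str:
        """Append one step and return its id so later steps can link to it."""
        s = TraceStep(step, component, version, _digest(inp), _digest(out), parent)
        self.steps.append(s)
        return s.step_id

    def contributors(self) -> List[str]:
        """Which models or agents (with versions) touched this decision, in order."""
        return [f"{s.component}@{s.version}" for s in self.steps]
```

Linking each step to its parent turns the flat log into a lineage graph, which is what lets someone walk backward from a decision to every component that shaped it.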

Why This Matters

Look, Karpathy’s project is a clever sketch of what’s possible. Autonomize is what makes it deployable, compliant, and operational at scale.

The article essentially proves our thesis: Model routing is easy. Enterprise orchestration is the real product.

The gap between a weekend prototype and an enterprise platform isn't about the models themselves. It's about everything that has to surround them to make AI safe, reliable, auditable, and genuinely useful in regulated industries. That's the layer we've built. And honestly, it's the layer that turns promising AI concepts into systems that actually work when real money and real patients are on the line.